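A couple of days ago I confirmed that I won't be able to attend EC-final, which means my roughly year-and-a-half ACM career is over. First, about the EC-final slot: our team's application had actually been approved, but because of various issues on the organizer's side, our coach decided to give up the slot out of concern for the university's reputation. Our team understands. After all, the slot was a surprise to us in the first place; we only trained for an extra half month, so there isn't much to regret.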

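In fact, the only major contests I have competed in are one ICPC regional, one CCPC, and one CCPC invitational, which is below average compared with other ACMers. Still, bowing out with a silver medal at the regional means I met my most basic goal. The main reason is that I didn't plan my university life well when I enrolled as a freshman: I joined some odd organizations and didn't focus on ACM. Had I planned properly, I might have retired in my sophomore year like sdj, or had more time to push for gold, or done an internship, or looked into some stranger things. Either way, I could have saved a lot of time.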

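At the regional, my teammate got the fifth problem accepted, which locked in silver with forty minutes left. I started coding the sixth problem; had it been accepted, we might have landed at the tail end of gold. But there are no ifs. We carried that regret with us after the contest, but we did try our best and did everything we were capable of. Over these months of training camp our team improved in every respect, and my teammates are the people I have been through the most trials with in university.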

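When I first joined the school team's training, I had to fill out a registration form, and one of the items was "What goal do you want to achieve?" I wrote "make it to the world final," and later learned that swb, a senior student, had written the same thing. Neither of us fulfilled that wish, and I fell further short than he did. This year CSU's ACM intake is stronger, with many top competitors who have OI experience, so I think this goal should be reached within two years.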

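I have dreamed of taking part in algorithm contests since high school. Back then, while I was in the "Aoxiang Program" (翱翔计划), a teacher described how students from super-strong high schools such as Tsinghua University High School and the High School Affiliated to Renmin University went to compete at IOI, and I began to dream of competing too. Unfortunately, my high school had no such team. At university I finally fulfilled that dream; although I never went abroad, it was still a very interesting experience.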

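During university I gave up a lot for ACM; as the saying goes, "ACMers have no holidays." But I gained even more in return. On the contest floor, the ability to shut out every distraction, the conviction to trust yourself and your teammates, and the calm needed to read English carefully are all necessary for getting results. Even more important is the everyday effort: the ten-plus hours in the computer lab every week, the accumulation of accepted problems and of teamwork skills. It is with these small, steady efforts that we meet the hard-won chances to compete.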

That's it for the retrospective; next come the memories.

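My first training session was in the summer after my freshman year. Many classmates had started training in the freshman winter break, but I always seemed to hear the news later than others. Although I had learned a little C in high school, I really had to start from scratch, learning which header files C++ has and when to include each one. Compared with classmates who had OI experience, my understanding of data structures was far weaker: they all knew how to work with structures like stacks and queues in practice, while I only knew some concepts. Still, my earlier experience calling libraries in VB got me through that period.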

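In the first semester of my sophomore year, I didn't really understand how the school selected contestants, so I went home over the National Day holiday, and as a result I missed that semester's ICPC and CCPC. cdn, a senior student who sat next to me in training, as well as classmates sdj, qt, and lfw, all competed. Afterwards cdn and sdj retired, while qt, lfw, and I kept going. Honestly, if I had competed then, I might have chosen to retire as well. But there are no ifs, and that is how the following year came about. I'm not sure whether that counts as lucky.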

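In the second semester of my sophomore year, I competed in the CCPC invitational with wwk and lfw as teammates and won my first (of my life?) national-level silver medal.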

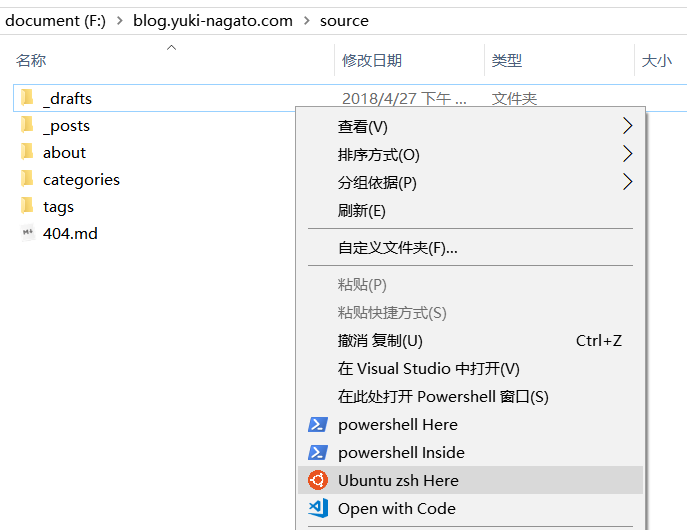

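Then came the summer after my sophomore year. I formed a new team with wwk and wy, and we did the Multi-University Training Contests every day. Those problems are fairly hard, and we felt crushed day after day, but we also improved a lot. We went back over all the advanced topics we had only vaguely understood, and I started organizing my personal template library systematically, which is the post on my blog.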

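In the first semester of my junior year, which is this semester, I competed in ICPC and CCPC and came away with one silver and one bronze. Both left some regret, but for contestants at our level, regrets in a contest are normal. More than a year of training finally paid off, so the regret isn't great. After learning that we were retiring, we still played the ByteDance online contest. The problems were very hard, and every team in the lab solved just one. That was our farewell contest.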

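Changsha's bitter winters and scorching summers are always dreadful, and if I had been able to go home during the holidays, university life would certainly have been much easier. But even if I were given another chance, I would still choose to train. I know full well that I can't accomplish very many things, and in fact I did little at university besides competing. But this is what I love most, what I consider the most meaningful thing I have done, and the experience that has affected me most deeply.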

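I still have half a year to prepare for recommended admission to graduate school, and I'll probably be working on projects while doing some research, so even after retiring I feel there is still plenty to do...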

I sincerely thank my teammates and everyone else who trained alongside me.

Finally, I'm posting the summaries I wrote after my earlier contests, as a more detailed record.